The AI Revolution Is Real. The Timeline Is Wrong

TL;DR: AI intelligence is plateauing.

The models are smart enough to be useful, but each gain in intelligence comes at an increasingly high cost. Yes, there is an AI revolution, but it is neither sudden nor about superintelligence.

I argue that AI will follow a pattern similar to that of electricity and the internet: a long buildout in which organizations integrate the technology, redesign their processes, and implement the changes. The market seems to be pricing a two-year timeline for this work, when it will realistically take a decade.

In short, the market is pricing the wrong timeline.

Electricity, the internet, and now AI follow the same pattern. AI won't take 40 years to fully play out, but it is unrealistic to imagine that thousands of rules, relationships, processes, and regulations will be replaced in a year or two, especially since AI intelligence will likely not improve much further.

The intelligence plateau

Between 2024 and early 2025, the core technology, large language models (LLMs), reached a level of intelligence that made them useful in production. Since then, raw intelligence gains have slowed.

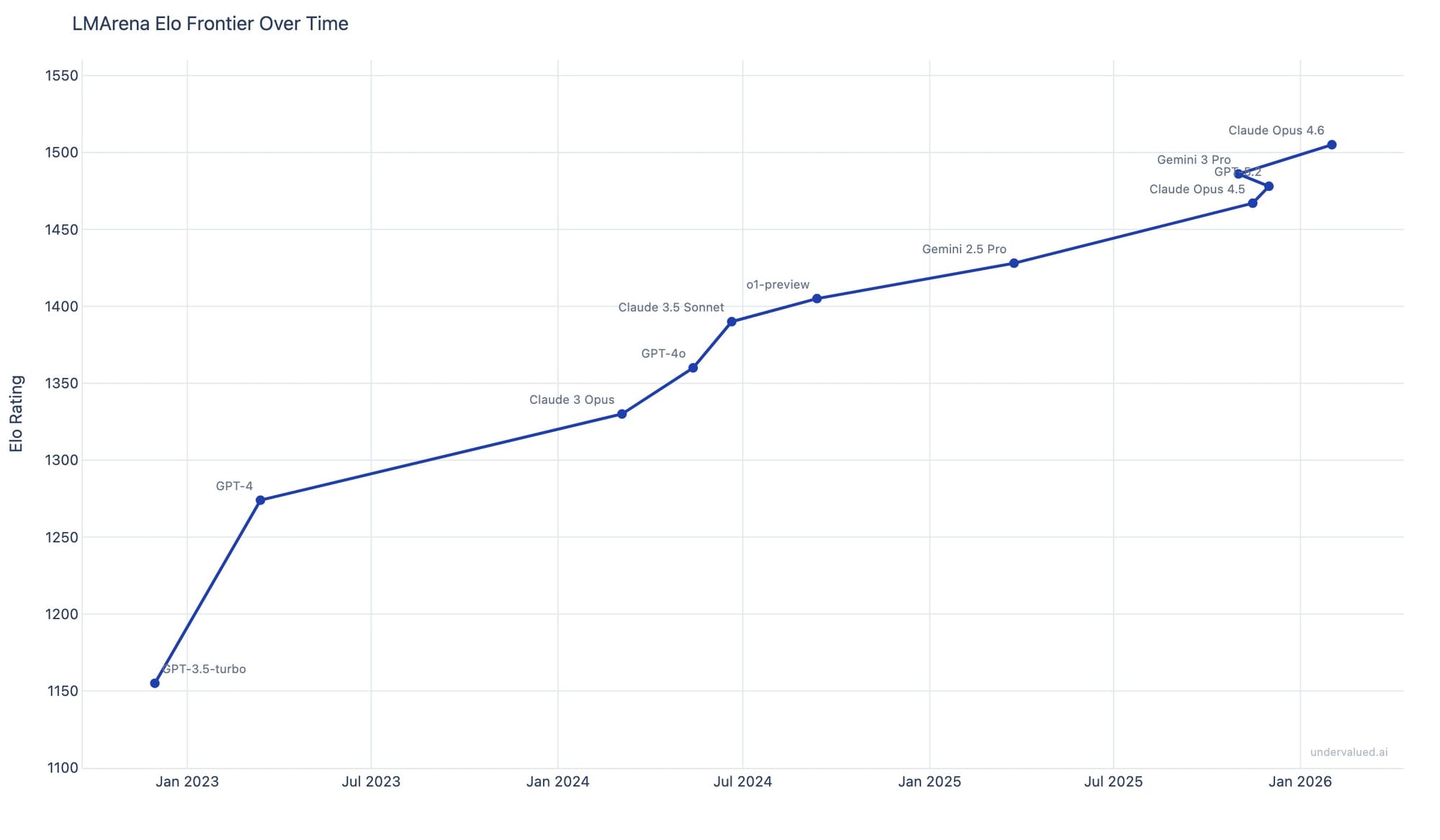

In the industry, AI intelligence is commonly measured with Elo ratings, which work like a tournament ranking: human evaluators compare the outputs of two models, and the model reviewers prefer climbs the rankings. Throughout this article, we use data from LMArena (formerly Chatbot Arena).
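To make the tournament analogy concrete, here is a minimal sketch of the classic Elo update after a single human preference vote. It is an illustration, not LMArena's method: the leaderboard itself fits ratings over all votes at once (a Bradley-Terry-style model) rather than updating them vote by vote, and the K-factor of 32 below is an assumption chosen for readability.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    """Return both ratings after one human preference vote between A and B.

    k (assumed 32 here) caps how many points a single vote can move a rating.
    """
    expected_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_a)  # positive if A did better than expected
    return rating_a + delta, rating_b - delta  # points are transferred, not created


# Two equally rated models: the preferred one gains k/2 points, the other loses them.
print(update(1500.0, 1500.0, a_won=True))  # (1516.0, 1484.0)
```

An upset (a lower-rated model winning) moves more points than an expected win, which is why ratings converge once enough votes accumulate.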

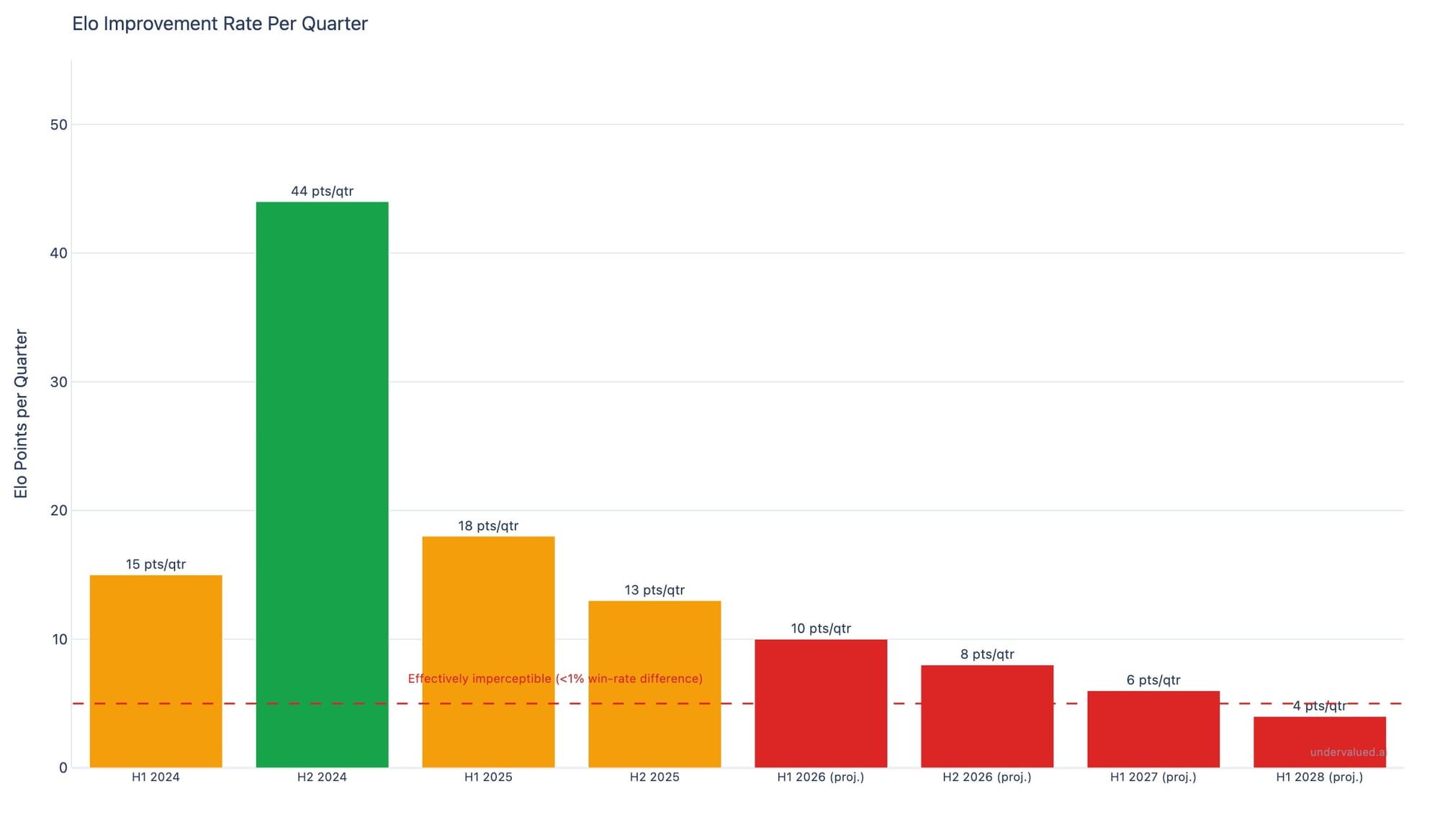

As the chart below shows, in early 2025, during the first wave of reasoning models like o1 and Gemini 2.5 Pro, Elo was growing at ~44 points per quarter. By late 2025, the rate had dropped to ~18 points per quarter. Today, in February 2026, it's closer to 13. Note also that the top models are all within 40 Elo points of each other.

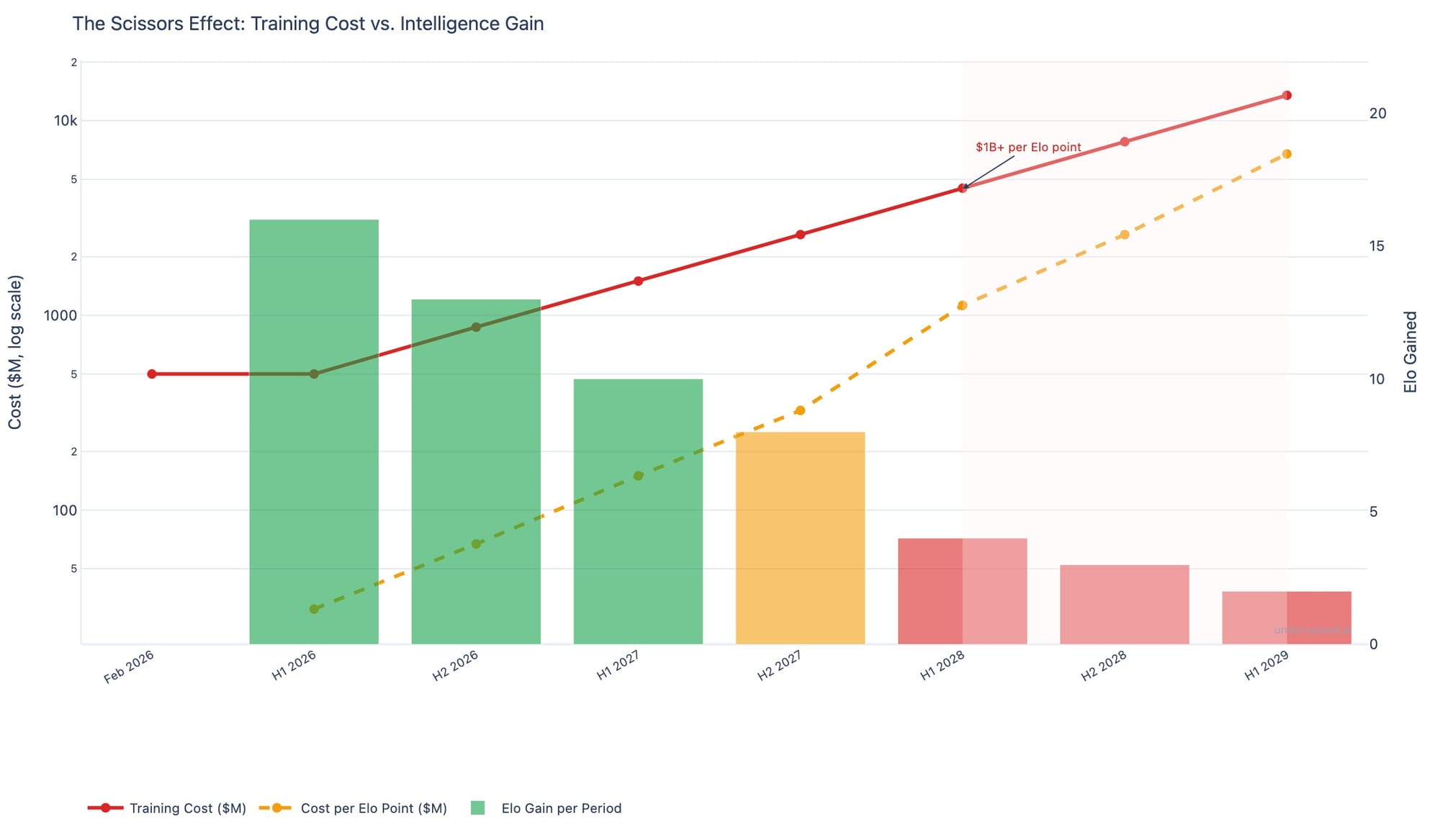

The second graph above makes this plateau even clearer. We projected the trend over the next two years; unless training costs fall significantly, the economics suggest this projection will hold.

Historically, frontier training costs have grown about 2.4x per year (Epoch AI's analysis of frontier models since 2016), and they are expected to keep growing at that pace. Assuming a frontier model today costs roughly $500 million to train (reference), a simple multiplication shows that training a new model will cost about $1.2 billion ($500M x 2.4) by early 2027, for an expected improvement of 6 Elo points. By H1 2028, the figure approaches $3 billion ($500M x 2.4^2, or about $2.88 billion), meaning it would cost about $700 million to gain a single Elo point.
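The projection is simple compounding, sketched below with the two inputs from the text: a $500 million base cost and 2.4x annual growth. The 6-point Elo gain for early 2027 comes from the text; the $700 million-per-point figure for 2028 corresponds to a gain of roughly 4 Elo points ($2.88B / $0.7B is about 4.1). The function names are illustrative only.

```python
BASE_COST_USD = 500e6   # assumed frontier training cost today (from the text)
GROWTH_PER_YEAR = 2.4   # historical annual growth in training cost (Epoch AI, per the text)


def training_cost(years_ahead: int) -> float:
    """Projected frontier training cost after compounding growth for years_ahead years."""
    return BASE_COST_USD * GROWTH_PER_YEAR ** years_ahead


def cost_per_elo_point(years_ahead: int, elo_gain: float) -> float:
    """Training cost divided by the Elo points it is expected to buy."""
    return training_cost(years_ahead) / elo_gain


print(f"early 2027: ${training_cost(1) / 1e9:.2f}B")  # early 2027: $1.20B
print(f"H1 2028:    ${training_cost(2) / 1e9:.2f}B")  # H1 2028:    $2.88B
print(f"early 2027 cost per Elo point: ${cost_per_elo_point(1, 6) / 1e6:.0f}M")  # $200M
```

Even in 2027 the marginal price is already about $200 million per Elo point, and it more than triples a year later.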

Will such spending be economically worthwhile when users can barely distinguish between models separated by 10 Elo points?
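The standard Elo formula shows why such small gaps are hard to notice. A minimal sketch, assuming the usual 400-point logistic scale: it converts a rating gap into the share of head-to-head votes the higher-rated model should win.

```python
def preference_probability(elo_gap: float) -> float:
    """Chance a voter prefers the higher-rated model, under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400))


for gap in (10, 40):
    print(f"{gap} Elo points -> preferred {preference_probability(gap):.1%} of the time")
# 10 Elo points -> preferred 51.4% of the time
# 40 Elo points -> preferred 55.7% of the time
```

A 10-point edge is barely better than a coin flip, and even the 40-point spread among today's top models means the leader wins only about 56% of comparisons.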

Opinions conflict, but it is telling that researchers building this technology increasingly say the same thing. Ilya Sutskever told the NeurIPS 2024 audience that pre-training as we know it will end. Yann LeCun calls LLMs "a distraction, a dead end" on the road to human-level AI. Even Dario Amodei, CEO of Anthropic, who has the most to gain from continued scaling, acknowledges multiple distinct walls for different capabilities.

The brick is good enough

So if intelligence is plateauing, then why does AI feel so much more capable every few months?

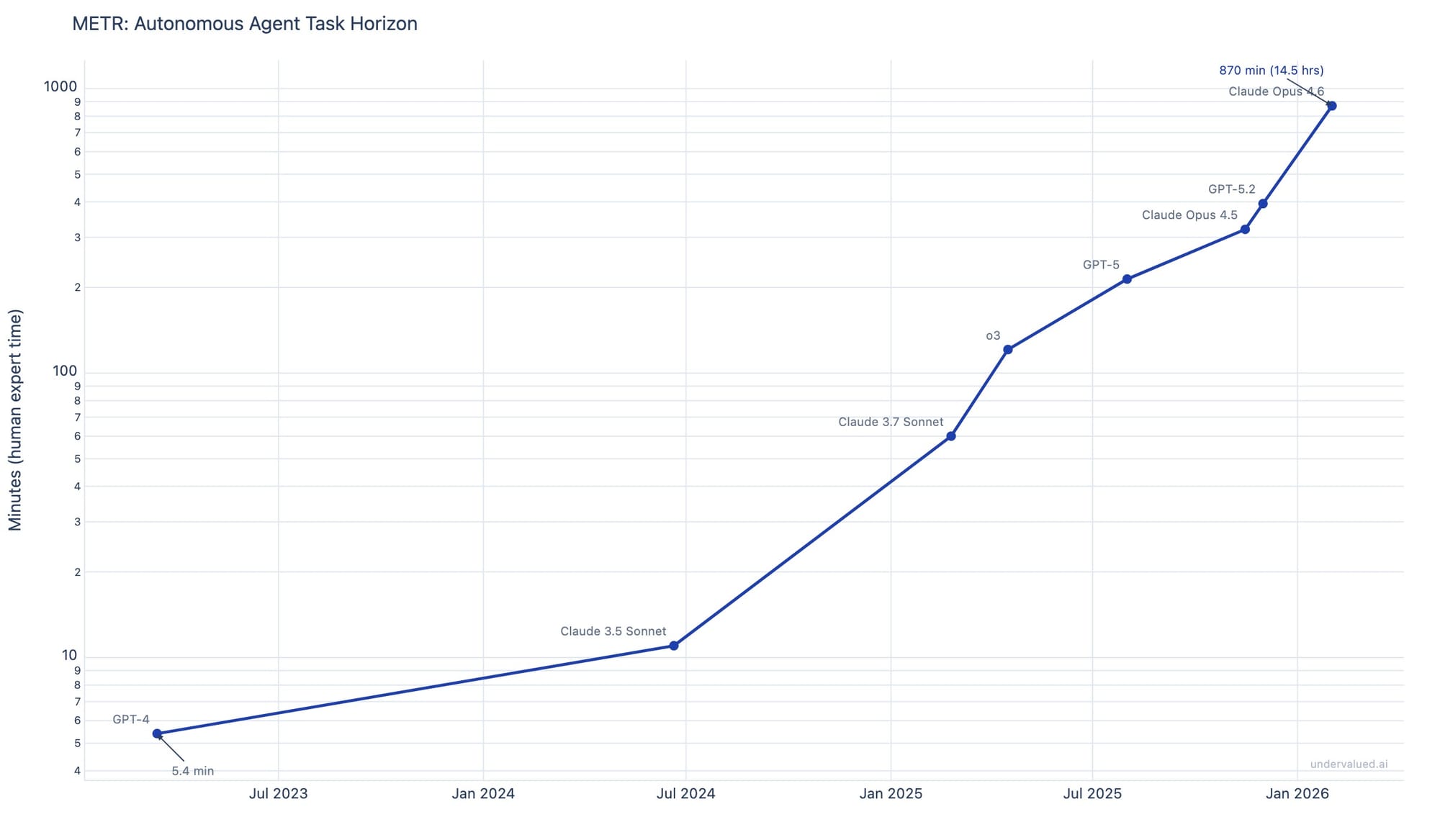

When we measure how long an AI agent can work on a task before it fails, we see exponential improvement, and it is tempting to misread this as the AI becoming more intelligent.

METR, an organization that tracks AI capabilities, measured in March 2023 that GPT-4 could handle tasks that take a human expert about 5.4 minutes. As of February 2026, Claude Opus 4.6 handles tasks that would take a human 870 minutes, roughly 14.5 hours of uninterrupted work!

This exponential improvement comes from the system built around the LLMs, not from the LLMs themselves. Think of a system of many LLMs designed so that they review one another, spawn copies of themselves, use tools such as web search or database queries, communicate, and share memory. The LLMs are not much more intelligent; we 'simply' built a system that amplifies their power. It's brilliant, but it's not a breakthrough in AI intelligence.
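A toy version of such a scaffold fits in a few dozen lines. This is a minimal sketch under loud assumptions: the `Agent` class, the `solve` loop, the `"tool_name: query"` convention for tool calls, and the toy models are all hypothetical stand-ins for real model APIs and agent frameworks, and spawning sub-agents is omitted. The point is structural: the model call is unchanged, and the capability comes from tools, shared memory, and a reviewer loop around it.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for an LLM call; a real system would call a model API here.
Model = Callable[[str], str]


@dataclass
class Agent:
    """One LLM 'brick' plus the tools and memory the scaffold gives it."""
    model: Model
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # shared when the same list is passed to several agents

    def run(self, task: str) -> str:
        reply = self.model(task)
        # Assumed convention: a reply like "search: query" is a request to call a tool.
        name, _, query = reply.partition(":")
        if name in self.tools:
            result = self.tools[name](query.strip())
            self.memory.append(result)
            reply = self.model(f"{task}\nTool result: {result}")
        return reply


def solve(task: str, worker: Agent, reviewer: Agent, max_rounds: int = 3) -> str:
    """Worker drafts an answer; reviewer critiques it until it approves or rounds run out."""
    draft = worker.run(task)
    for _ in range(max_rounds):
        verdict = reviewer.run(f"Review this answer to '{task}': {draft}")
        if verdict.startswith("APPROVE"):
            break
        draft = worker.run(f"{task}\nReviewer feedback: {verdict}")
    return draft


# Toy models standing in for LLMs (purely illustrative).
def toy_worker(prompt: str) -> str:
    if "Tool result" in prompt:
        return "The answer is " + prompt.rsplit(": ", 1)[1]
    return "search: capital of France"


def toy_reviewer(prompt: str) -> str:
    return "APPROVE" if "Paris" in prompt else "REVISE: cite a source"


shared: list[str] = []
worker = Agent(toy_worker, {"search": lambda q: "Paris"}, shared)
reviewer = Agent(toy_reviewer, {}, shared)
print(solve("What is the capital of France?", worker, reviewer))  # The answer is Paris
```

Neither toy model "knows" the answer on its own; the tool call and the review step produce it, which is the brick-and-structure distinction in miniature.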

In short, we built infrastructure that uses AI agents as bricks, but the bricks, however intelligent, are still bricks.

As the chart above shows, this scaffolding delivered a roughly 160x increase in task duration (870 / 5.4 minutes), while intelligence as measured by Elo improved only about 18% over the same period (from ~1274 to ~1505).
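The contrast is easy to quantify from the figures quoted above. A minimal sketch, assuming 35 months between March 2023 and February 2026; as we understand it, METR's headline "time horizon" is the task length at which a model succeeds about half the time, so these are 50%-success figures.

```python
import math

# Figures quoted in the text.
horizon_start_min, horizon_end_min = 5.4, 870  # METR task length: GPT-4 (Mar 2023) vs Claude Opus 4.6 (Feb 2026)
elo_start, elo_end = 1274, 1505                # top LMArena Elo over the same period
months = 35                                    # March 2023 to February 2026

horizon_ratio = horizon_end_min / horizon_start_min
doublings = math.log2(horizon_ratio)           # how many times the task length doubled
print(f"Task horizon: {horizon_ratio:.0f}x, {doublings:.1f} doublings, "
      f"one every {months / doublings:.1f} months")
print(f"Elo: +{elo_end / elo_start - 1:.0%}")
# Task horizon: 161x, 7.3 doublings, one every 4.8 months
# Elo: +18%
```

Because Elo is a relative scale, the absolute gap (about 231 points) is arguably the more meaningful number, but either way the curve is far flatter than the task-horizon curve, which doubles roughly every five months.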

Combining these intelligent bricks has made them far more capable and useful, which is remarkable, but the intelligence beneath them is reaching its limits.

That being said, the models understand more than skeptics give them credit for. In a famous experiment, researchers trained a GPT model on Othello move sequences alone: no board, no rules, no images. The model spontaneously built an internal representation of the game board, because modeling it was the most efficient way to predict the next move. Anthropic has since found similar internal representations in much larger models (reference).

So there is real intelligence, although of a different form than ours.

Other capability improvements are still to come. In 2024, OpenAI's o-series showed that "thinking longer" improves output quality and can let smaller models match larger ones, provided they get enough compute at inference time. There is also quantisation, which 'compresses' large models by storing their weights at lower precision, dramatically improving cost and latency at the expense of some accuracy. This might open the door to a new generation of AI-native embedded products, but it does not change the intelligence plateau.
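Quantisation is easy to see in miniature. The sketch below assumes the simplest scheme, symmetric per-tensor int8, and is not the method any particular lab uses; production schemes typically use per-channel or per-group scales and often 4-bit formats. Each 32-bit float weight becomes a one-byte integer plus a shared scale, a 4x memory saving, at the cost of a rounding error of at most half a step.

```python
def quantise_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantisation: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # size of one integer step in weight units
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q: list[int], scale: float) -> list[float]:
    """Approximate reconstruction of the original weights."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.003, 0.5]
q, scale = quantise_int8(weights)
restored = dequantise(q, scale)
print(q)  # [82, -127, 0, 50]
```

The tiny weight 0.003 rounds to zero: that lost detail is the "some accuracy" traded for speed and memory.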

In short, the brick is good enough. The value creation has shifted to whoever builds the best structures around it.

The brick is also a commodity

This insight is what proponents of the AI-bubble thesis rely on.

It was illustrated on January 27, 2025, a week after DeepSeek (a Chinese lab funded by a quantitative hedge fund) released a reasoning model built on a base model whose training reportedly cost $5.6 million, versus $40-100 million for comparable Western models. Even at $50 million (assuming the reported figure is understated), DeepSeek caught up with a decade of Western AI research at a fraction of the budget. In one day, over $1 trillion in market capitalization vanished from tech stocks.

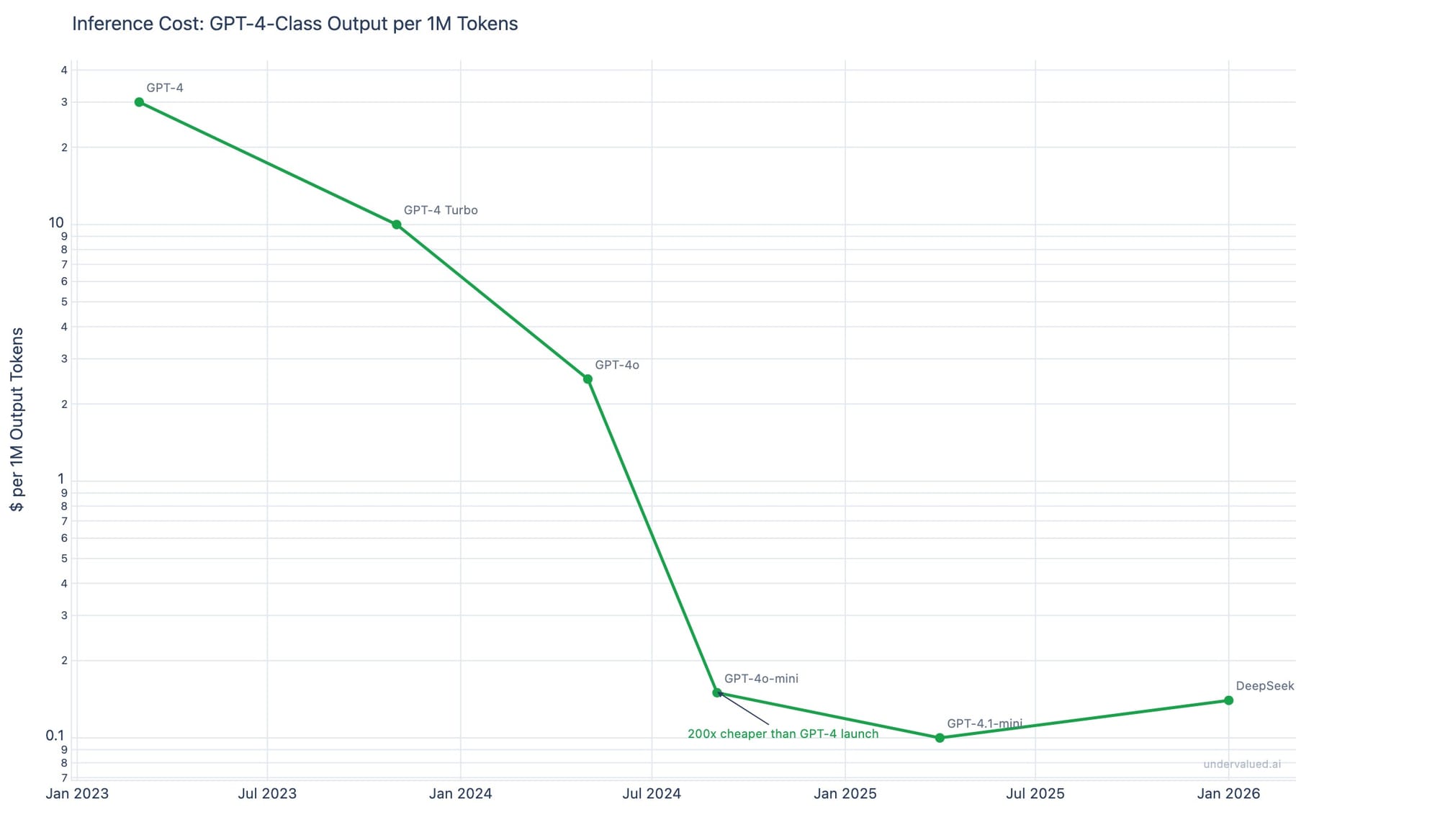

The market recovered within weeks, but the lesson remains: this intelligence brick is becoming a commodity. Thanks to techniques like mixture of experts and other efficiency gains, combined with the constant need for fresh training data, models become obsolete within about 12 months, as each new generation's budget buys more capability and the previous generation is commoditized. This window will likely keep narrowing.

Meanwhile, inference costs have fallen roughly 200x over the past 3 years. GPT-4-class performance that cost $30 per million input tokens at GPT-4's launch now costs $0.15 with GPT-4o-mini.
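The 200x figure is easier to grasp as an annual rate. A minimal sketch using published list prices per million tokens: GPT-4 (8K context) launched at $30 input and $60 output, and GPT-4o-mini lists $0.15 input and $0.60 output, so the 200x drop applies to input tokens and output tokens fell about 100x. The three-year window is taken from the text.

```python
def price_drop(old: float, new: float, years: float) -> tuple[float, float]:
    """Total price reduction and the equivalent constant yearly reduction."""
    total = old / new
    return total, total ** (1 / years)  # compound annual factor


# Published list prices in USD per million tokens: (GPT-4 at launch, GPT-4o-mini).
for label, old, new in (("input", 30.00, 0.15), ("output", 60.00, 0.60)):
    total, annual = price_drop(old, new, years=3)
    print(f"{label}: {total:.0f}x cheaper overall, about {annual:.1f}x per year")
# input: 200x cheaper overall, about 5.8x per year
# output: 100x cheaper overall, about 4.6x per year
```

Either way, the same capability gets several times cheaper every year, which is what commoditization looks like in the numbers.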

When a technology becomes both powerful enough and cheap enough, value shifts from producing it to deploying it, and this is where existing players have an advantage.

Why SaaS is not dead

Throughout 2025 and early 2026, the "SaaS is dead" narrative led to a massive selloff in software stocks. The [BVP Emerging Cloud Index](https://www.bvp.com/bvp-nasdaq-emerging-cloud-index) fell over 40% from its 2024 peak. As an example, Adobe and Salesforce saw sharp declines despite stable revenue growth.

The underlying thesis seems to be: AI agents will replace enterprise software entirely.

And that thesis misunderstands how enterprises actually adopt technology.

When 10,000 employees use an advanced software system built on proprietary data, every one of them has built a daily workflow around it, with the habits and cognitive familiarity that come with it. The switching cost must include the productivity collapse of an entire organization that has to unlearn how it works and learn something new. Why would a CTO take that risk if the current system is not failing?

And these systems are not failing. They work.

What's more, enterprise SaaS sales are not only about price and capability. There is also a trust component, especially when it comes to intellectual property and sensitive data, and a need for personalisation to fit each company's unique workflows. These are barriers that an AI-first company will not overcome simply by plugging agents together.

The incumbents can also simply add AI capabilities to their stack as features, and they are arguably better positioned because they already have the distribution, the user base, and the API surface. ServiceNow's $2.85 billion acquisition of Moveworks is exactly this: the existing player absorbs the AI capability.

Remove-and-replace only works when the AI alternative is so much better that the ROI is immediate and the migration is simple. That will likely be the case for consumer software with low switching costs, but most enterprise software does not fall into this category.

As a result, I don't see AI replacing enterprise SaaS, and the selloff looks like an overreaction, just as expectations for AI infrastructure look like an overreaction in the other direction.

The Cisco parallel

The infrastructure companies (Nvidia, Broadcom, the data center REITs, etc.) face a different problem: they are priced for perpetual acceleration.

Nvidia's data center revenue reached $167 billion on a trailing twelve-month basis as of Q3 FY2026. The capital expenditure commitments from the largest cloud providers (the "hyperscalers") for 2025 alone are close to $320 billion and for 2026 are projected to be in the $500-700 billion range.

In June 2024, Sequoia Capital's David Cahn calculated that companies would need to generate over $600 billion in new annual revenue from AI to justify the infrastructure investment, and the same month Goldman Sachs asked the question outright in its report "Gen AI: Too Much Spend, Too Little Benefit?". For perspective, the entire global advertising industry crossed $1 trillion in total revenue for the first time in 2024, and Alphabet, the largest advertising company in history, captured roughly $265 billion of that. Meeting the AI revenue target requires creating new demand at a scale without historical precedent. The market is priced as if this target, or something close to it, will be reached. It is an ambitious, highly optimistic target. This combination of heavy capex, high expectations priced in, and a questionable path to meeting them invites a parallel with Cisco.
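Putting the $600 billion target next to the advertising benchmarks from the same paragraph shows its scale. A minimal sketch using only the figures quoted above:

```python
required_ai_revenue = 600e9  # annual AI revenue target quoted in the text
global_ad_market = 1_000e9   # global advertising revenue, 2024
alphabet_ads = 265e9         # Alphabet's advertising revenue, 2024

print(f"{required_ai_revenue / global_ad_market:.0%} of global ad spend")   # 60% of global ad spend
print(f"{required_ai_revenue / alphabet_ads:.1f}x Alphabet's ad revenue")  # 2.3x Alphabet's ad revenue
```

In other words, AI would need to create new annual revenue equal to more than twice the business of the largest advertising company in history.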

In 2000, Cisco was the world's most valuable company and the largest infrastructure provider for the internet. Revenue was growing over 50% per year, and the stock traded at over 100x forward earnings. Then telecom capex collapsed, and Cisco fell 89% from its peak. The hyperscalers are not Cisco. The AI revolution is real, but so was the internet. The question is simply: are we pricing the speed of the buildout correctly?

So what's next?

Electricity took 40 years to reshape manufacturing, and the internet took 20 to reshape commerce. AI will reshape knowledge work on a shorter timeline, but that timeline will likely be measured in years or even decades, not quarters.

The era of dramatic leaps in AI intelligence is likely behind us. From here, the gains come from integration. Change will come, and it will be major, but it will come through the infrastructure built around the models. And it will be existing large players, with deep customer relationships and embedded workflows, that capture most of the value.

As for AI providers, I believe that in the next 12 months, the marketing will shift from "our model is smarter" to "our model or our product is cheaper, more reliable, and better integrated with your workflows."

At that point, the narrative will shift from "intelligence" to efficiency and reliability, and the market will reprice accordingly.

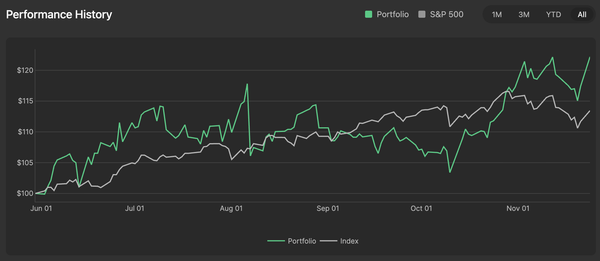

* The AI hedge fund has been live since February 2025 and has been trading real capital since January 2026. Its performance and trades are shared transparently on undervalued.ai.

This article is for informational purposes only and does not constitute investment advice.